TL;DR

If you just want to see the conclusion, skip to the Summary. Please note that the content discussed here is only guaranteed to work for version 0.3.2. Other versions have not been tested.

Introduction

GraphRAG is a new Retrieval-Augmented Generation (RAG) technique released by Microsoft. The usual RAG approach splits text into chunks, embeds them, stores the vectors in a vector store, and then retrieves chunks by comparing them with the embedded user query. GraphRAG instead extracts information from the text and builds a knowledge graph, which helps it capture complex relationships across different documents.
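To make the contrast concrete, here is a toy sketch. It is not GraphRAG's actual pipeline: the triples are hand-written stand-ins for what an LLM extraction step might produce, and the helper names `build_graph` and `two_hop_paths` are made up for illustration. The point is only that once facts from different documents live in one graph, a question can follow edges across document boundaries.

```python
from collections import defaultdict

# Hand-written (subject, relation, object) triples standing in for what an
# LLM extraction step might produce from two different documents.
TRIPLES = [
    ("Scrooge", "employs", "Bob Cratchit"),     # from document A
    ("Bob Cratchit", "father_of", "Tiny Tim"),  # from document B
    ("Scrooge", "partner_of", "Jacob Marley"),  # from document A
]


def build_graph(triples):
    """Build an adjacency map: entity -> list of (relation, neighbor)."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph


def two_hop_paths(graph, start):
    """Return every start -> mid -> end path reachable in exactly two hops."""
    paths = []
    for rel1, mid in graph.get(start, []):
        for rel2, end in graph.get(mid, []):
            paths.append((start, rel1, mid, rel2, end))
    return paths


graph = build_graph(TRIPLES)
paths = two_hop_paths(graph, "Scrooge")
print(paths)
```

A chunk-similarity search would need both facts to land in the same retrieved chunk to connect Scrooge to Tiny Tim, while the graph connects them through Bob Cratchit.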

As of the time of writing, the latest official version is 0.3.2; the code is in the microsoft/graphrag GitHub repository at the v0.3.2 tag. While the content discussed here might work for other versions, it is not guaranteed.

If you’ve read the official tutorial, you’ll notice that the config for version 0.3.2 only supports OpenAI and Azure OpenAI. Putting your API key in the config file works and is the simplest approach. However, GraphRAG makes frequent LLM calls during indexing, which gets costly, and this setup cannot use a custom fine-tuned model of your own.

So, is it possible to use an open-source model running locally, such as Llama 3.1? The answer is yes, but you’ll need to overcome several challenges, as the official implementation doesn’t support local models, and you’ll need to modify some things manually.

You might wonder why not use LLMGraphTransformer from LangChain instead? I can only say that, as of now, it is still an experimental feature and differs slightly from Microsoft’s official implementation. If you’re interested, you can check out the following links:

- https://python.langchain.com/v0.2/docs/how_to/graph_constructing/

- https://python.langchain.com/v0.2/docs/tutorials/graph/

Prerequisites

- Install Ollama and have it running.

- An LLM, which you can pull with a command such as ollama pull llama3.1:8b.

- A text embedding model, which you can pull with a command such as ollama pull nomic-embed-text.
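If you want to double-check these prerequisites from Python, something like the sketch below could work. It assumes Ollama is listening on its default address (http://localhost:11434) and that its /api/tags endpoint returns a JSON object with a "models" list whose entries carry a "name" field. The helper names `fetch_tags` and `missing_models` are hypothetical, and the sample payload is hand-written so the example runs without a server.

```python
import json
import urllib.request

OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"  # assumed default address


def fetch_tags(url=OLLAMA_TAGS_URL, timeout=5):
    """Ask a running Ollama server which models are available locally."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.load(response)


def missing_models(tags_payload, required):
    """Return the required model names that do not appear in the payload."""
    available = {model["name"] for model in tags_payload.get("models", [])}
    missing = []
    for name in required:
        # A model pulled without a tag is listed as "<name>:latest".
        candidates = {name} if ":" in name else {name, f"{name}:latest"}
        if not candidates & available:
            missing.append(name)
    return missing


# Hand-written sample payload; with a live server, use fetch_tags() instead.
sample_payload = {
    "models": [{"name": "llama3.1:8b"}, {"name": "nomic-embed-text:latest"}]
}
print(missing_models(sample_payload, ["llama3.1:8b", "nomic-embed-text"]))
```

An empty list means both models are present; anything listed still needs an ollama pull.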

Troubleshooting Process

When I first opened the Get Started page, I was excited to see how short it was and thought I could get it working quickly. Looking back, I was overly optimistic…

The first few steps were straightforward and did not cause issues:

pip install graphrag

mkdir -p ./ragtest/input

curl https://www.gutenberg.org/cache/epub/24022/pg24022.txt > ./ragtest/input/book.txt

python -m graphrag.index --init --root ./ragtest

Next, I needed to edit settings.yaml, and that’s where the problems began. How should I configure the model?

First, delete .env because we don’t need the API key.

Then, open settings.yaml and replace its contents with the version from the Gist linked in the Summary.

The differences compared to the original settings.yaml are:

- llm

  - api_key: Set it to anything. It’s not important since we won’t be using an API. I set it to NONE.

  - model: Set this to your local LLM model, such as llama3.1:8b.

  - max_tokens: Set this to a number smaller than the maximum token limit for your LLM model.

  - api_base: Set this to http://localhost:11434/v1.

- embeddings

  - llm

    - api_key: Again, set it to anything since we won’t use the API. I set it to NONE.

    - model: Set this to your local Text Embedding model, such as nomic-embed-text.

    - api_base: Set this to http://localhost:11434/api.

  - batch_max_tokens: Set this to a number smaller than the maximum token limit for your Text Embedding model.

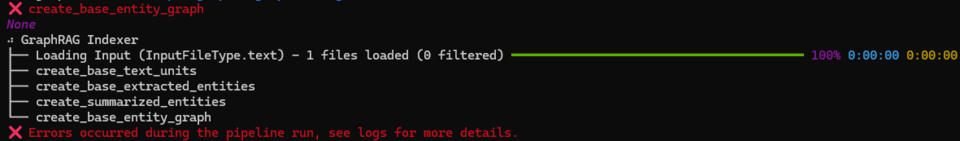

- chunks

  - size: Lower this to 300. If not adjusted, you’ll encounter the following error. More details in the related issue: https://github.com/microsoft/graphrag/issues/362

09:44:07,997 datashaper.workflow.workflow ERROR Error executing verb "cluster_graph" in create_base_entity_graph: Columns must be same length as key

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 410, in _execute_verb

result = node.verb.func(**verb_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/graph/clustering/cluster_graph.py", line 102, in cluster_graph

output_df[[level_to, to]] = pd.DataFrame(

~~~~~~~~~^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/frame.py", line 4299, in __setitem__

self._setitem_array(key, value)

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/frame.py", line 4341, in _setitem_array

check_key_length(self.columns, key, value)

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/indexers/utils.py", line 390, in check_key_length

raise ValueError("Columns must be same length as key")

ValueError: Columns must be same length as key

16:25:46,215 graphrag.index.reporting.file_workflow_callbacks INFO Error executing verb "cluster_graph" in create_base_entity_graph: Columns must be same length as key details=None

16:25:46,216 graphrag.index.run ERROR error running workflow create_base_entity_graph

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/run.py", line 325, in run_pipeline

result = await workflow.run(context, callbacks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 369, in run

timing = await self._execute_verb(node, context, callbacks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 410, in _execute_verb

result = node.verb.func(**verb_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/graph/clustering/cluster_graph.py", line 102, in cluster_graph

output_df[[level_to, to]] = pd.DataFrame(

~~~~~~~~~^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/frame.py", line 4299, in __setitem__

self._setitem_array(key, value)

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/frame.py", line 4341, in _setitem_array

check_key_length(self.columns, key, value)

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/pandas/core/indexers/utils.py", line 390, in check_key_length

raise ValueError("Columns must be same length as key")

ValueError: Columns must be same length as key
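The settings changes listed above can also be written as a small function over a plain dictionary, which makes it easier to see exactly which keys change. This is only a sketch: `apply_local_overrides` is a hypothetical helper, the nesting (llm, embeddings.llm, embeddings.batch_max_tokens, chunks.size) is my reading of the list above, and the token limits passed in the example are placeholder numbers, not recommendations. Loading and saving the YAML file itself is left out.

```python
import copy


def apply_local_overrides(settings, llm_model, embedding_model,
                          llm_max_tokens, embedding_batch_max_tokens):
    """Return a copy of a settings dict with the local-model changes applied."""
    updated = copy.deepcopy(settings)

    llm = updated.setdefault("llm", {})
    llm["api_key"] = "NONE"  # any placeholder works; no hosted API is called
    llm["model"] = llm_model
    llm["max_tokens"] = llm_max_tokens  # keep below the model's own limit
    llm["api_base"] = "http://localhost:11434/v1"

    embeddings = updated.setdefault("embeddings", {})
    embedding_llm = embeddings.setdefault("llm", {})
    embedding_llm["api_key"] = "NONE"
    embedding_llm["model"] = embedding_model
    embedding_llm["api_base"] = "http://localhost:11434/api"
    embeddings["batch_max_tokens"] = embedding_batch_max_tokens

    # Smaller chunks avoid the "Columns must be same length as key" error.
    updated.setdefault("chunks", {})["size"] = 300
    return updated


# Placeholder starting point and placeholder token limits, for illustration.
base = {"llm": {"type": "openai_chat"}, "embeddings": {"llm": {"type": "openai_embedding"}}}
local = apply_local_overrides(base, "llama3.1:8b", "nomic-embed-text", 4000, 2000)
print(local["llm"]["api_base"], local["chunks"]["size"])
```

Working on a deep copy keeps the original dictionary untouched, so the two can be compared afterwards.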

After changing the settings, excitedly run python -m graphrag.index --root ./ragtest, and you’ll get the following error:

09:19:54,508 datashaper.workflow.workflow ERROR Error executing verb "text_embed" in create_final_entities: 'NoneType' object is not iterable

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 415, in _execute_verb

result = await result

^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/text_embed.py", line 105, in text_embed

return await _text_embed_in_memory(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/text_embed.py", line 130, in _text_embed_in_memory

result = await strategy_exec(texts, callbacks, cache, strategy_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 62, in run

embeddings = await _execute(llm, text_batches, ticker, semaphore)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 106, in _execute

results = await asyncio.gather(*futures)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 100, in embed

chunk_embeddings = await llm(chunk)

^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/caching_llm.py", line 96, in __call__

result = await self._delegate(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 177, in __call__

result, start = await execute_with_retry()

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 159, in execute_with_retry

async for attempt in retryer:

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/asyncio/__init__.py", line 166, in __anext__

do = await self.iter(retry_state=self._retry_state)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/asyncio/__init__.py", line 153, in iter

result = await action(retry_state)

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/_utils.py", line 99, in inner

return call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/__init__.py", line 398, in <lambda>

self._add_action_func(lambda rs: rs.outcome.result())

^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/concurrent/futures/_base.py", line 449, in result

return self.__get_result()

^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/concurrent/futures/_base.py", line 401, in __get_result

raise self._exception

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 165, in execute_with_retry

return await do_attempt(), start

^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 147, in do_attempt

return await self._delegate(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/base_llm.py", line 49, in __call__

return await self._invoke(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/base_llm.py", line 53, in _invoke

output = await self._execute_llm(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/openai_embeddings_llm.py", line 36, in _execute_llm

embedding = await self.client.embeddings.create(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/resources/embeddings.py", line 237, in create

return await self._post(

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1816, in post

return await self.request(cast_to, opts, stream=stream, stream_cls=stream_cls)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1510, in request

return await self._request(

^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1613, in _request

return await self._process_response(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1710, in _process_response

return await api_response.parse()

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_response.py", line 420, in parse

parsed = self._options.post_parser(parsed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/resources/embeddings.py", line 225, in parser

for embedding in obj.data:

TypeError: 'NoneType' object is not iterable

09:19:54,533 graphrag.index.reporting.file_workflow_callbacks INFO Error executing verb "text_embed" in create_final_entities: 'NoneType' object is not iterable details=None

09:19:54,541 graphrag.index.run ERROR error running workflow create_final_entities

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/run.py", line 325, in run_pipeline

result = await workflow.run(context, callbacks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 369, in run

timing = await self._execute_verb(node, context, callbacks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/datashaper/workflow/workflow.py", line 415, in _execute_verb

result = await result

^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/text_embed.py", line 105, in text_embed

return await _text_embed_in_memory(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/text_embed.py", line 130, in _text_embed_in_memory

result = await strategy_exec(texts, callbacks, cache, strategy_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 62, in run

embeddings = await _execute(llm, text_batches, ticker, semaphore)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 106, in _execute

results = await asyncio.gather(*futures)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/index/verbs/text/embed/strategies/openai.py", line 100, in embed

chunk_embeddings = await llm(chunk)

^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/caching_llm.py", line 96, in __call__

result = await self._delegate(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 177, in __call__

result, start = await execute_with_retry()

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 159, in execute_with_retry

async for attempt in retryer:

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/asyncio/__init__.py", line 166, in __anext__

do = await self.iter(retry_state=self._retry_state)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/asyncio/__init__.py", line 153, in iter

result = await action(retry_state)

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/_utils.py", line 99, in inner

return call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/tenacity/__init__.py", line 398, in <lambda>

self._add_action_func(lambda rs: rs.outcome.result())

^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/concurrent/futures/_base.py", line 449, in result

return self.__get_result()

^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/concurrent/futures/_base.py", line 401, in __get_result

raise self._exception

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 165, in execute_with_retry

return await do_attempt(), start

^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/rate_limiting_llm.py", line 147, in do_attempt

return await self._delegate(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/base_llm.py", line 49, in __call__

return await self._invoke(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/base/base_llm.py", line 53, in _invoke

output = await self._execute_llm(input, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/openai_embeddings_llm.py", line 36, in _execute_llm

embedding = await self.client.embeddings.create(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/resources/embeddings.py", line 237, in create

return await self._post(

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1816, in post

return await self.request(cast_to, opts, stream=stream, stream_cls=stream_cls)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1510, in request

return await self._request(

^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1613, in _request

return await self._process_response(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_base_client.py", line 1710, in _process_response

return await api_response.parse()

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/_response.py", line 420, in parse

parsed = self._options.post_parser(parsed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/openai/resources/embeddings.py", line 225, in parser

for embedding in obj.data:

TypeError: 'NoneType' object is not iterable

The solution comes from this GitHub comment.

The comment suggests modifying a file inside the installed package, but I don’t think that’s a good idea, as it might cause issues when using the package’s original functionality later. So, I opted for an external monkey patch approach. First, create a file called graphrag_monkey_patch.py containing the patch; the full file is in the Gist linked in the Summary.

Make sure to install ollama by running pip install ollama.
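For readers new to the technique, here is a minimal sketch of what monkey-patching an embedding method looks like. It is not the patch file from the Gist: `StandInEmbeddingsLLM` is a hypothetical stand-in for a library class, and `toy_embed` is a placeholder for a real call to a local embedding model. Only the pattern carries over, namely swapping a method on the class at runtime so the installed package stays unmodified.

```python
import asyncio


class StandInEmbeddingsLLM:
    """Hypothetical stand-in for a library class whose embedding call fails."""

    async def _execute_llm(self, input, **kwargs):
        # Mimic the failure above: iterating over a result that is None.
        data = None
        return [item for item in data]


def toy_embed(text):
    """Placeholder for a request to a local embedding model."""
    return [float(len(text)), float(text.count(" "))]


def patch_embeddings(cls, embed_fn):
    """Replace cls._execute_llm at runtime with a version that uses embed_fn."""

    async def _execute_llm(self, input, **kwargs):
        return [embed_fn(text) for text in input]

    cls._execute_llm = _execute_llm


patch_embeddings(StandInEmbeddingsLLM, toy_embed)
vectors = asyncio.run(StandInEmbeddingsLLM()._execute_llm(["bah humbug", "ghost"]))
print(vectors)
```

Because the replacement happens when the patch function is called, it has to run before the patched class is used, which is why the CLI entry points get edited next.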

We download the graphrag.index CLI content and monkey-patch it.

First, run

wget https://raw.githubusercontent.com/microsoft/graphrag/v0.3.2/graphrag/index/__main__.py -O index.py

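Then, replace line 8 of index.py:

from .cli import index_cli

with the following:

from graphrag.index.cli import index_cli

from graphrag_monkey_patch import patch_graphrag

patch_graphrag()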
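If you would rather script this edit than make it by hand, a small helper along these lines could do it. `replace_line` is a hypothetical function written for this post, and the demo runs on a throwaway file so nothing real gets modified.

```python
import tempfile
from pathlib import Path


def replace_line(path, line_number, new_lines):
    """Replace one 1-indexed line of a text file with one or more new lines."""
    file_path = Path(path)
    lines = file_path.read_text(encoding="utf-8").splitlines()
    if not 1 <= line_number <= len(lines):
        raise ValueError(f"{path} has no line {line_number}")
    # Slice assignment swaps a single line for any number of lines.
    lines[line_number - 1:line_number] = list(new_lines)
    file_path.write_text("\n".join(lines) + "\n", encoding="utf-8")


# Demo on a temporary file with made-up contents.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "demo.py"
    demo.write_text("import argparse\nfrom .cli import main\nmain()\n", encoding="utf-8")
    replace_line(demo, 2, ["from package.cli import main", "print('patched')"])
    patched = demo.read_text(encoding="utf-8")

print(patched)
```

For index.py the call would be replace_line("index.py", 8, [...]) with the three replacement lines from the Summary. Check that line 8 really is the import you expect before overwriting it, since other versions of the file may be laid out differently.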

Now, run python index.py --root ./ragtest. Finally, no more errors!

Try executing a Global Query:

python -m graphrag.query \

--root ./ragtest \

--method global \

"What are the top themes in this story?"

This error occurs:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)

not expected dict type. type=<class 'str'>:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)

not expected dict type. type=<class 'str'>:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)

not expected dict type. type=<class 'str'>:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)

not expected dict type. type=<class 'str'>:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)

not expected dict type. type=<class 'str'>:

Traceback (most recent call last):

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/llm/openai/utils.py", line 130, in try_parse_json_object

result = json.loads(input)

^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 337, in decode

obj, end = self.raw_decode(s, idx=_w(s, 0).end())

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/json/decoder.py", line 355, in raw_decode

raise JSONDecodeError("Expecting value", s, err.value) from None

json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)
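The message "Expecting value: line 1 column 1 (char 0)" is what json.loads raises when the string does not start with JSON at all, for example when it is empty or begins with plain prose. That would fit a model that answers in sentences where a JSON object was expected. The sketch below shows one tolerant way to pull a JSON object out of such a reply. It is an illustration of the failure mode, not the patch used in this article, and `extract_json_object` is a hypothetical helper.

```python
import json


def extract_json_object(text):
    """Return the first JSON object embedded in text, or None if there is none."""
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at the given index
            # and ignores whatever follows it.
            value, _ = decoder.raw_decode(text, start)
            return value
        except json.JSONDecodeError:
            start = text.find("{", start + 1)
    return None


wrapped = 'Sure! Here is the result:\n{"points": [{"description": "redemption", "score": 90}]}'
prose_only = "You didn't provide a story."

print(extract_json_object(wrapped))
print(extract_json_object(prose_only))
```

The first reply yields a dictionary even though it starts with prose; the second yields None, which the caller can handle without a traceback.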

Try executing a Local Query:

python -m graphrag.query \

--root ./ragtest \

--method local \

"Who is Scrooge, and what are his main relationships?"

This error occurs:

Error embedding chunk {'OpenAIEmbedding': "'NoneType' object is not iterable"}

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/__main__.py", line 92, in <module>

run_local_search(

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/cli.py", line 164, in run_local_search

response, context_data = asyncio.run(

^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/asyncio/runners.py", line 190, in run

return runner.run(main)

^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/asyncio/base_events.py", line 654, in run_until_complete

return future.result()

^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/api.py", line 222, in local_search

result: SearchResult = await search_engine.asearch(query=query)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/structured_search/local_search/search.py", line 67, in asearch

context_text, context_records = self.context_builder.build_context(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/structured_search/local_search/mixed_context.py", line 139, in build_context

selected_entities = map_query_to_entities(

^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/context_builder/entity_extraction.py", line 55, in map_query_to_entities

search_results = text_embedding_vectorstore.similarity_search_by_text(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/vector_stores/lancedb.py", line 118, in similarity_search_by_text

query_embedding = text_embedder(text)

^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/context_builder/entity_extraction.py", line 57, in <lambda>

text_embedder=lambda t: text_embedder.embed(t),

^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/llm/oai/embedding.py", line 96, in embed

chunk_embeddings = np.average(chunk_embeddings, axis=0, weights=chunk_lens)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/numpy/lib/function_base.py", line 550, in average

raise ZeroDivisionError(

ZeroDivisionError: Weights sum to zero, can't be normalized

So, I had to monkey-patch the query CLI as well. Related issues and comments:

- https://github.com/microsoft/graphrag/issues/345#issuecomment-2230317752

- https://github.com/microsoft/graphrag/issues/575#issuecomment-2252045859
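The ZeroDivisionError in the Local Query traceback comes from a length-weighted average of chunk embeddings (the np.average call with weights=chunk_lens). Since the log first reports "Error embedding chunk", a plausible reading is that no chunk was embedded successfully, leaving nothing to weight. The pure-Python sketch below reproduces that averaging step with a guard for the empty case. `weighted_average` is a hypothetical helper, not code from graphrag.

```python
def weighted_average(vectors, weights):
    """Length-weighted mean of equally sized vectors, or None if nothing to average."""
    total = sum(weights)
    if not vectors or total == 0:
        # Every embedding request failed, so there is no meaningful average.
        return None
    size = len(vectors[0])
    return [
        sum(vector[i] * weight for vector, weight in zip(vectors, weights)) / total
        for i in range(size)
    ]


# Two chunk embeddings, weighted by chunk lengths of 3 and 1 tokens.
print(weighted_average([[1.0, 0.0], [0.0, 1.0]], [3, 1]))

# No chunk was embedded: the guard returns None instead of dividing by zero.
print(weighted_average([], []))
```

The guard only makes the failure explicit. The underlying problem is still that the embedding request itself fails, which is what the query-side patch has to address.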

Add two more functions to graphrag_monkey_patch.py to cover the query path; the full file is in the Gist linked in the Summary.

Run

wget https://raw.githubusercontent.com/microsoft/graphrag/v0.3.2/graphrag/query/__main__.py -O query.py

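Replace line 9 of query.py:

from .cli import run_global_search, run_local_search

with the following:

from graphrag.query.cli import run_global_search, run_local_search

from graphrag_monkey_patch import patch_graphrag

patch_graphrag()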

Then, run the Global and Local Search queries. Local Search looks good, while Global Search still needs parameter adjustments. But at least there are no more errors! Hooray!

$ python query.py --root ./ragtest --method global "What are the top themes in this story?"

INFO: Reading settings from ragtest/settings.yaml

/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/indexer_adapters.py:71: SettingWithCopyWarning:

A value is trying to be set on a copy of a slice from a DataFrame.

Try using .loc[row_indexer,col_indexer] = value instead

See the caveats in the documentation: https://pandas.pydata.org/pandas-docs/stable/user_guide/indexing.html#returning-a-view-versus-a-copy

entity_df["community"] = entity_df["community"].fillna(-1)

/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/indexer_adapters.py:72: SettingWithCopyWarning:

A value is trying to be set on a copy of a slice from a DataFrame.

Try using .loc[row_indexer,col_indexer] = value instead

See the caveats in the documentation: https://pandas.pydata.org/pandas-docs/stable/user_guide/indexing.html#returning-a-view-versus-a-copy

entity_df["community"] = entity_df["community"].astype(int)

creating llm client with {'api_key': 'REDACTED,len=4', 'type': "openai_chat", 'model': 'llama3.1:8b', 'max_tokens': 8191, 'temperature': 0.0, 'top_p': 1.0, 'n': 1, 'request_timeout': 180.0, 'api_base': 'http://localhost:11434/v1', 'api_version': None, 'organization': None, 'proxy': None, 'cognitive_services_endpoint': None, 'deployment_name': None, 'model_supports_json': True, 'tokens_per_minute': 0, 'requests_per_minute': 0, 'max_retries': 10, 'max_retry_wait': 10.0, 'sleep_on_rate_limit_recommendation': True, 'concurrent_requests': 25}

SUCCESS: Global Search Response:

You didn't provide a story. Please share the text, and I'll be happy to help you identify the top themes.

$ python query.py --root ./ragtest --method local "Who is Scrooge, and what are his main relationships?"

INFO: Reading settings from ragtest/settings.yaml

INFO: Vector Store Args: {}

/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/indexer_adapters.py:71: SettingWithCopyWarning:

A value is trying to be set on a copy of a slice from a DataFrame.

Try using .loc[row_indexer,col_indexer] = value instead

See the caveats in the documentation: https://pandas.pydata.org/pandas-docs/stable/user_guide/indexing.html#returning-a-view-versus-a-copy

entity_df["community"] = entity_df["community"].fillna(-1)

/home/chishengliu/miniforge3/envs/graphrag/lib/python3.11/site-packages/graphrag/query/indexer_adapters.py:72: SettingWithCopyWarning:

A value is trying to be set on a copy of a slice from a DataFrame.

Try using .loc[row_indexer,col_indexer] = value instead

See the caveats in the documentation: https://pandas.pydata.org/pandas-docs/stable/user_guide/indexing.html#returning-a-view-versus-a-copy

entity_df["community"] = entity_df["community"].astype(int)

creating llm client with {'api_key': 'REDACTED,len=4', 'type': "openai_chat", 'model': 'llama3.1:8b', 'max_tokens': 8191, 'temperature': 0.0, 'top_p': 1.0, 'n': 1, 'request_timeout': 180.0, 'api_base': 'http://localhost:11434/v1', 'api_version': None, 'organization': None, 'proxy': None, 'cognitive_services_endpoint': None, 'deployment_name': None, 'model_supports_json': True, 'tokens_per_minute': 0, 'requests_per_minute': 0, 'max_retries': 10, 'max_retry_wait': 10.0, 'sleep_on_rate_limit_recommendation': True, 'concurrent_requests': 25}

creating embedding llm client with {'api_key': 'REDACTED,len=4', 'type': "openai_embedding", 'model': 'nomic-embed-text', 'max_tokens': 4000, 'temperature': 0, 'top_p': 1, 'n': 1, 'request_timeout': 180.0, 'api_base': 'http://localhost:11434/api', 'api_version': None, 'organization': None, 'proxy': None, 'cognitive_services_endpoint': None, 'deployment_name': None, 'model_supports_json': None, 'tokens_per_minute': 0, 'requests_per_minute': 0, 'max_retries': 10, 'max_retry_wait': 10.0, 'sleep_on_rate_limit_recommendation': True, 'concurrent_requests': 25}

SUCCESS: Local Search Response:

A classic character!

Ebenezer Scrooge is the main protagonist of Charles Dickens' novella "A Christmas Carol". He is a miserly and bitter old man who lives in London during the Victorian era. Scrooge is known for his extreme love of money, his disdain for charity and kindness, and his general grumpiness.

Scrooge's main relationships are:

1. **Jacob Marley**: The ghost of his deceased business partner, who appears to Scrooge on Christmas Eve to warn him about the consequences of his selfish ways.

2. **Bob Cratchit**: His underpaid and overworked clerk, who is struggling to provide for his large family during the holiday season. Scrooge's treatment of Bob is particularly cruel, as he pays him a meager salary and expects him to work long hours without complaint.

3. **Tiny Tim**: The youngest son of Bob Cratchit, who suffers from illness and poverty. Scrooge's heartlessness towards Tiny Tim serves as a catalyst for his transformation in the story.

4. **His nephew, Fred**: A kind and generous young man who invites Scrooge to join him for Christmas dinner, but is rebuffed by his miserly uncle.

5. **The Ghosts of Christmas Past, Present, and Yet to Come**: Three supernatural visitations that haunt Scrooge on Christmas Eve, forcing him to confront the consequences of his actions and the possibility of a better future.

Through these relationships, Dickens explores themes of redemption, kindness, and the importance of human connection in "A Christmas Carol".

Summary

All the code from this article is in this Gist. Here’s a quick summary of the steps:

- Follow the official Get Started guide and run python -m graphrag.index --init --root ./ragtest.

- Replace settings.yaml with the one in the Gist.

- Run wget https://raw.githubusercontent.com/microsoft/graphrag/v0.3.2/graphrag/index/__main__.py -O index.py

- Run wget https://raw.githubusercontent.com/microsoft/graphrag/v0.3.2/graphrag/query/__main__.py -O query.py

- Download graphrag_monkey_patch.py from the Gist.

- Replace line 8 of index.py with:

      from graphrag.index.cli import index_cli
      from graphrag_monkey_patch import patch_graphrag
      patch_graphrag()

- Replace line 9 of query.py with:

      from graphrag.query.cli import run_global_search, run_local_search
      from graphrag_monkey_patch import patch_graphrag
      patch_graphrag()

- Whenever you need to use python -m graphrag.index, switch to using python index.py instead. Similarly, for python -m graphrag.query, switch to python query.py.
